Date: Sat, 9 Dec 2017 11:35:18 -0800

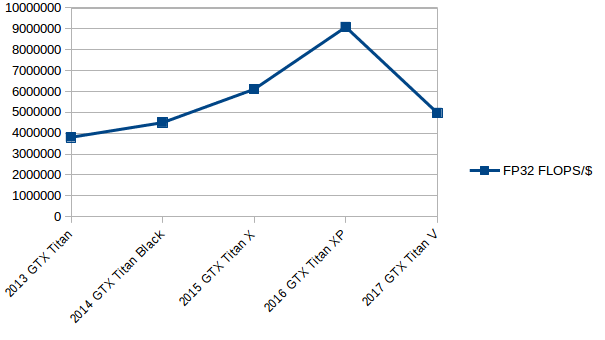

The GTX Titan V is an overpriced joke for anything but single-GPU deep

learning unless you're sufficiently Jedi to understand gradient compression

tricks, because it has zero zip nada null NVLink support. I'd say do not

buy one, but I know some of you guys see this as the GPU equivalent of

buying a $300,000 McLaren instead of a $70,000 Corvette: sure it's

marginally faster than the Corvette, but the !/$ is atrocious, a regression

back to 2014 in fact. And let's face it, you're never going to own a

McLaren, but you could stretch and buy a GTX Titan V to live the dream.

Personally, I'm waiting for the sub-$1000 variant with the (for the

purposes of anything but substandard image processing and derp) useless

tensor cores deactivated. Can someone send a T-1000 back in time to

convince Geoffrey Hinton to become a carpenter instead of a computer

scientist? Thanks...

On Fri, Dec 8, 2017 at 11:34 AM, Dow Hurst <dphurst.uncg.edu> wrote:

> I think the new Nvidia Titan V just announced

> <https://arstechnica.com/gadgets/2017/12/nvidia-brings-its-monster-volta-gpu-to-a-graphics-cards-and-it-costs-3000/>

> would have some positive impact on the possibility of the development of

> Amber on GPUs for the Volta architecture. Seems that a $3K card, even

> though expensive, is much more in reach than the original $10K card. The

> price will drop as time passes making it even more accessible. What kind of

> improvements to Amber could be made within the scope of the Titan V? I

> understand it won't have any SLI connections and is still focused on

> computation rather than gaming. Would Scott Le Grand's comment still hold

> true?

>

> "*What NVIDIA will miss out on is beating Anton with one or two DGX-1Vs.

> That's something I think could be achieved in a matter of months, but it

> requires rewriting the inner loops from the ground up to parallelize

> everything across multiple GPUs (especially the FFTs), switching to one

> process per server with thread per GPU (Volta is the first GPU where how

> one invokes things matters), and atomic synchronization between kernels

> instead of returning to the CPU*."

>

> Sincerely,

> Dow

> ⚛Dow Hurst, Research Scientist

> 340 Sullivan Science Bldg.

> Dept. of Chem. and Biochem.

> University of North Carolina at Greensboro

> PO Box 26170 Greensboro, NC 27402-6170

>

>

> On Mon, Oct 30, 2017 at 2:57 PM, Ross Walker <ross.rosswalker.co.uk>

> wrote:

>

> > Dear All,

> >

> > In the spirit of open discussion I want to bring the AMBER community's

> > attention to a concern raised in two recent news articles:

> >

> > 1) "Nvidia halts distribution partners from selling GeForce graphics cards

> > to server, HPC sectors" - http://www.digitimes.com/news/a20171027PD200.html

> >

> > 2) "Nvidia is cracking down on servers powered by Geforce graphics cards"

> > - https://www.pcgamesn.com/nvidia-geforce-server

> >

> > I know many of you have benefitted greatly over the years from the GPU

> > revolution that has transformed the field of Molecular Dynamics. A lot of

> > the work in this field was provided by people volunteering their time and

> > grew out of the idea that many of us could not have access to or could not

> > afford supercomputers for MD. The underlying drive was to bring

> > supercomputing performance to the 99% and thus greatly extend the amount

> > and quality of science each of us could do. For AMBER this meant supporting

> > all three lines of NVIDIA graphics card (GeForce, Quadro and Tesla) in

> > whatever format or combination you, the scientist and customer, wanted.

> >

> > In my opinion key to AMBER's success was the idea that, for running MD

> > simulations, very few people in the academic field, and indeed many R&D

> > groups within companies, small or large, could afford the high-end Tesla

> > systems, whose price has been steadily going up substantially above

> > inflation with each new generation (for example the $149K DGX-1). The

> > understanding, both mine and that of the field in general, has essentially

> > always been that assuming one was willing to accept the risks on

> > reliability etc., use of GeForce cards should be perfectly reasonable. We

> > are not after all running US air traffic control, or some other equally

> > critical system. It is prudent use of limited R&D funds, or in many cases

> > taxpayer money, and we are the customers after all, so we should be free to

> > choose the hardware we buy. NVIDIA has fought a number of us for many years

> > on this front but mostly in a passive-aggressive stance with the occasional

> > personal insult or threat. As highlighted in the above articles with the

> > latest AI bubble they have cemented a worrying monopoly and are now getting

> > substantially more aggressive, using this monopoly to pressure suppliers to

> > try to effectively ban the use of GeForce cards for scientific compute and

> > restrict what we can buy to Tesla cards, that for the vast majority of us

> > are simply out of our price range.

> >

> > In my opinion this is a very worrying trend that could hurt us all and have

> > serious repercussions on all of our scientific productivities and the field

> > in general. If this is a concern to you too I would encourage each of you

> > to speak up. Contact people you know at NVIDIA and make your concerns

> > heard. I am concerned that if we as a community do not speak up now we

> > could see our field be completely priced out of the ability to make use of

> > GPUs for MD over the next year.

> >

> > All the best

> > Ross

> >

> >

> >

> > _______________________________________________

> > AMBER mailing list

> > AMBER.ambermd.org

> > http://lists.ambermd.org/mailman/listinfo/amber

> >


(image/png attachment: sad2014.png)